Bio

I am currently an Applied Scientist II at Microsoft AI. I obtained my PhD in Electrical and Computer Engineering from UT Austin in June 2025, advised by Prof. Haris Vikalo. I was also a member of the WNCG lab (Wireless Networking and Communications Group). Before joining UT Austin, I obtained my B.Eng. degree from the Department of Electrical Engineering and Automation at South China University of Technology.

Research Interests

My research interests lie in LLM post-training and efficient LLMs, with a focus on making large language models more capable, reliable, and deployable. Specifically, I am interested in:

1) LLM post-training: SFT, reinforcement learning, and training efficiency.

2) Efficient LLMs: inference acceleration via quantization, pruning, and distillation.

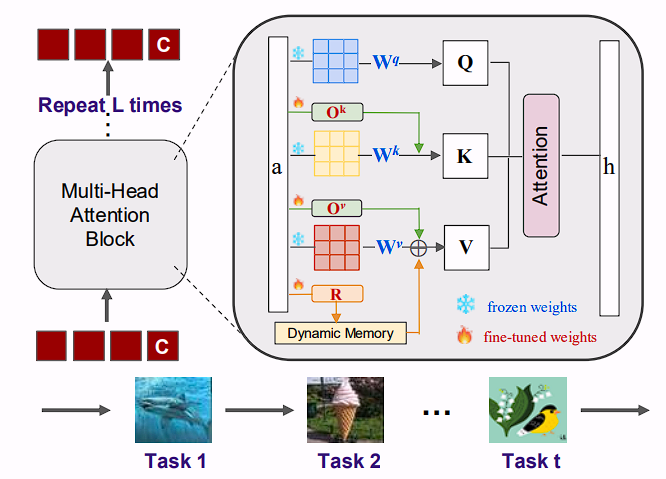

3) Continual learning: fine-tuning pretrained LLMs and VLMs on downstream tasks.

4) Trustworthy AI: privacy-preserving machine learning and model safety.

My research statement is available as a PDF.

News

March, 2026 I joined Microsoft AI as an Applied Scientist II.

March, 2026 Two papers accepted to CVPR 2026 workshops.

March, 2026 One paper about long-horizon LLM agents on arXiv.

February, 2026 One paper about LLM quantization on arXiv.

January, 2026 One paper accepted to ICLR 2026.

September, 2025 One paper about continual learning on arXiv.

June, 2025 I joined Accenture as a Senior AI Research Scientist.

June, 2025 Passed my PhD defense!

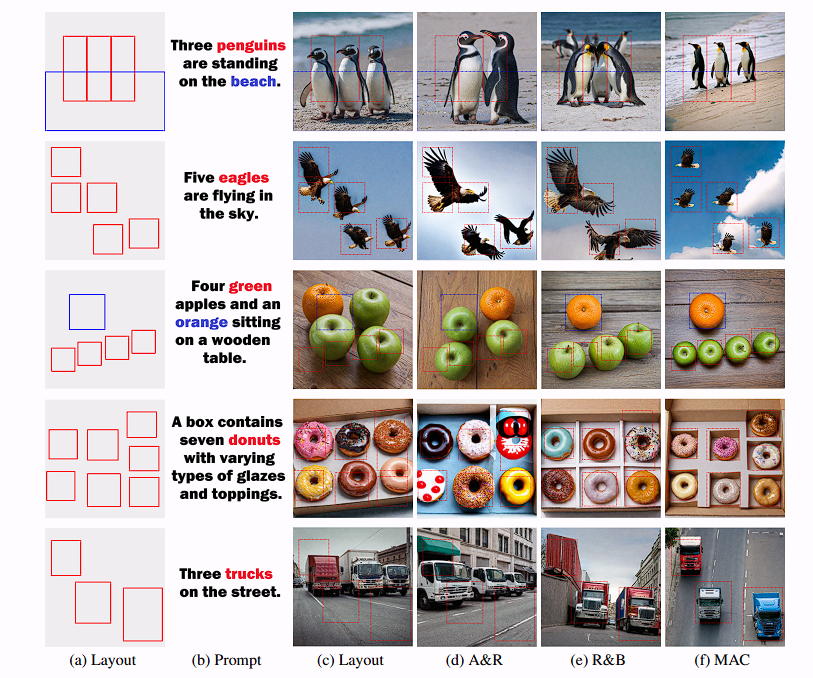

November, 2024 One paper about layout-to-image generation based on diffusion models on arXiv.

September, 2024 One paper about continual learning on foundation models on arXiv.

November, 2024 Passed my Ph.D. progress review.

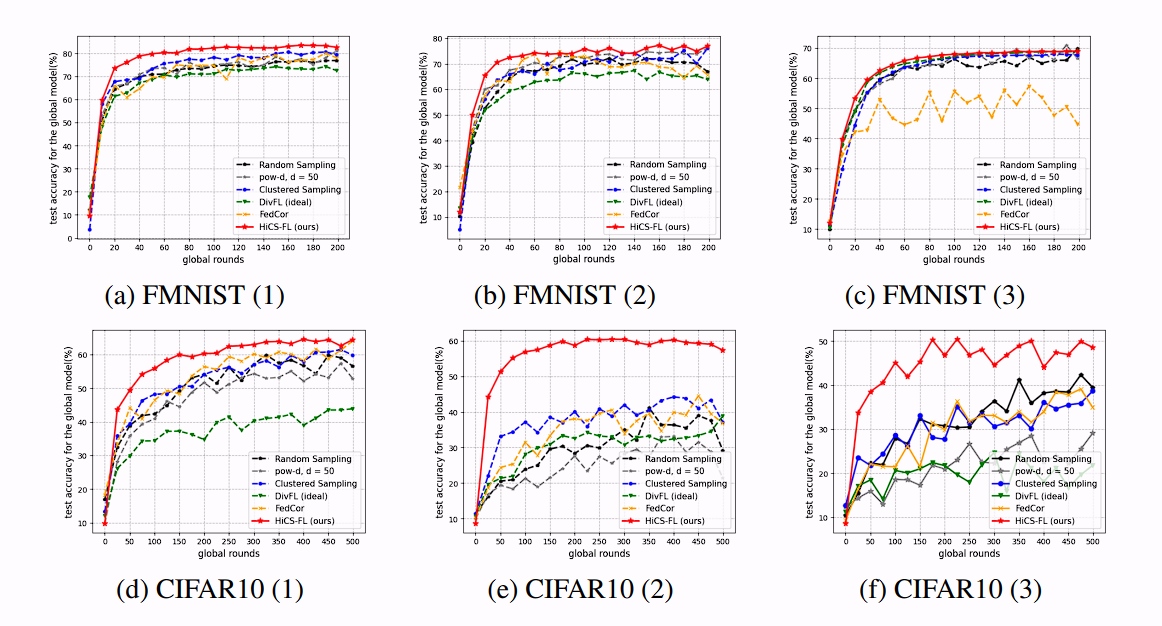

September, 2024 One paper accepted to NeurIPS 2024.

May, 2024 One paper accepted to ICML 2024.

February, 2024 One paper accepted to CVPR 2024.

February, 2024 Joined the PPML team at SonyAI as a research intern.

November, 2023 One paper about mixed-precision quantization preprinted on arXiv.

September, 2023 One paper about client selection preprinted on arXiv.

March, 2023 One paper accepted to a CVPR 2023 workshop.

January, 2023 One paper accepted to ICLR 2023.

Vitæ

Full resume available as a PDF.

- Microsoft AI, March 2026
  Applied Scientist II
  Mountain View, California

- Accenture, June 2025 – March 2026
  Senior AI Research Scientist
  Advanced AI Center

- SonyAI, Spring 2024
  Research Internship
  PPML team

- Toyota InfoTech Labs, Summer 2022
  Research Internship
  AI/ML Infrastructure & Data Lab

- Nokia Bell Labs, Spring 2022
  Research Internship
  Mathematics & Algorithms Research Group

- University of Texas at Austin, 2020 – 2025
  Ph.D. Student
  Electrical and Computer Engineering
  Advisor: Haris Vikalo

- South China University of Technology, 2015 – 2019
  B.Sc. Student
  Electrical Engineering
  Rank 2/304

Teaching

TA for CS395T, 2020 Fall: Foundation of Predictive Machine Learning

TA for EE381K, 2021 Spring: Statistical Machine Learning

TA for EE422C, 2021 Summer: Software Design and Implementation II (Java)

TA for EE380L, 2021 Fall: Data Mining

TA for CS395T, 2022 Spring: Convex Optimization

TA for EE351M, 2022 Fall: Digital Signal Processing

Service

Conference reviewer: ICML (22, 23, 24, 25, 26), NeurIPS (22, 23, 24, 25), ICLR (24, 25), IJCAI (24, 25), AAAI (25), CVPR (25).

Journal reviewer: IEEE TMC

Skills

Programming Languages: Python, Java, C/C++, SQL, LaTeX

Software: PyTorch, TensorFlow, Transformers, Linux, AWS, Google Cloud, MATLAB, Git, Docker